Projects

I've taken part in a lot of projects over the years. I've listed my favorites below.

Chess Transformers

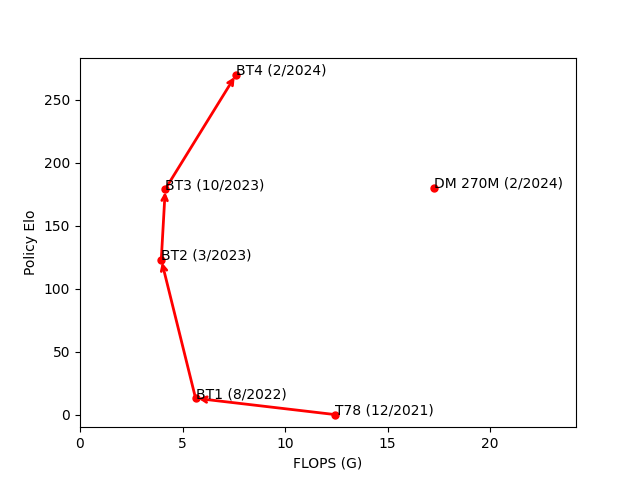

Since 2022 I have been working on the transformer models used by Leela Chess Zero to guide search.

My main contribution was a position encoding that lets a model play as strongly as one 2.5x its size, at the cost of roughly 15% higher latency.

At a scale of 190 million parameters, the latest iteration of the architecture produces a policy that is competitive with grandmasters.

Modern GPUs can evaluate this model thousands of times per second, allowing the engine to effectively emulate the strength of thousands of grandmasters.

A weaker feat in the same vein was achieved independently and concurrently in a 2024 paper by DeepMind, with an agent that evaluates a 270-million-parameter model for each legal move. Our strongest model outperforms DeepMind's with 30x less computation.

This architecture has also been the subject of a NeurIPS article and two preprints.

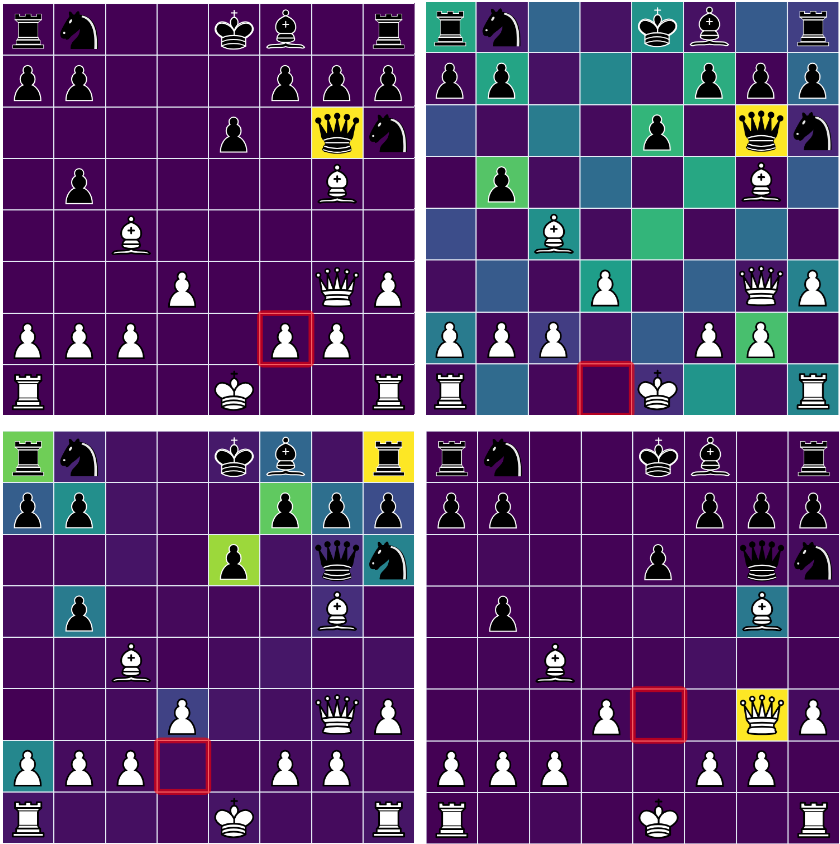

One of the most interesting discoveries was an attention head which transmits information from the "to" square of the player's predicted follow-up move to the "to" square of the player's next move.

Each of my testing runs took a week or two on an A100 GPU, and training of full models took on the order of months on a cluster of 8 A100s.

All my work was in TensorFlow, and models were trained in the supervised setting on datasets of billions to tens of billions of positions generated by prior reinforcement learning runs.

Some of the improvements I made to search include uncertainty weighting, which was first proposed in Go and lets the engine put more effort into positions whose evaluation the neural network reports as uncertain, gaining 5 Elo; and a scheme for sometimes reusing evaluations for positions that differ only in the 50-move-rule counter, gaining around 10 Elo.
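The evaluation-reuse idea can be illustrated with a small cache keyed on the position with the 50-move counter stripped out. This is a minimal sketch under stated assumptions, not Lc0's code: positions are FEN strings, `evaluate` stands in for the network, and the `reuse_limit` rule for when reuse is allowed is hypothetical.

```python
from typing import Callable, Dict, Tuple

def key_without_clock(fen: str) -> str:
    """Keep board, side to move, castling and en passant (the first four FEN fields)."""
    return " ".join(fen.split()[:4])

class EvalCache:
    """Evaluation cache that can share entries across 50-move-rule counters.

    reuse_limit is a hypothetical threshold: a stored value is reused for a
    different halfmove clock only while both clocks are below it, on the
    assumption that the counter barely matters until a draw by the rule nears.
    """

    def __init__(self, evaluate: Callable[[str], float], reuse_limit: int = 20):
        self.evaluate = evaluate              # stand-in for a neural network call
        self.reuse_limit = reuse_limit
        self.table: Dict[str, Tuple[int, float]] = {}
        self.network_calls = 0

    def get(self, fen: str) -> float:
        clock = int(fen.split()[4])           # halfmove clock field
        key = key_without_clock(fen)
        hit = self.table.get(key)
        if hit is not None:
            stored_clock, value = hit
            if stored_clock == clock or max(stored_clock, clock) < self.reuse_limit:
                return value
        self.network_calls += 1
        value = self.evaluate(fen)
        self.table[key] = (clock, value)
        return value

cache = EvalCache(lambda fen: 0.25)
cache.get("8/8/8/4k3/8/8/4K3/4R3 w - - 3 40")
cache.get("8/8/8/4k3/8/8/4K3/4R3 w - - 7 42")   # reused: same position, small clocks
cache.get("8/8/8/4k3/8/8/4K3/4R3 w - - 90 80")  # evaluated again: clock is large
print(cache.network_calls)  # 2
```

The design question is where the counter stops being irrelevant. Close to 100 half-moves a draw claim becomes possible, so reusing an evaluation from a low-clock sibling there would be misleading.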

Versions of Leela equipped with a Chessformer model and these search improvements defeated the reigning champion, Stockfish, at the TCEC Cup 11 and TCEC Swiss 6 and 7 championships.

See my blog post and our preprint. We have a newer submission under review.

Stockfish Development

Since 2024 I have been involved in the development of Stockfish, which is widely considered the strongest chess engine in existence. I have made 60 commits to the official Stockfish repository. Some of my favorite improvements, each of which gained Elo, are listed below:

- I implemented an algorithm in CuPy to increase the "block sparsity" of Stockfish's neural network. The idea was to iteratively compute the change in sparsity from swapping neurons as a matrix multiplication between 0-1 matrices, which runs quickly on NVIDIA's Tensor Cores. Another contributor turned this into a command-line utility. The speedup varied from 0.9% to 2.5% depending on the system.

- I had a series of three Elo gainers in the "prior countermove bonus", which updates the scores of moves in our history tables when the opponent's move was strong enough to push us below the search bounds. My improvements included making the multipliers in the bonus fractional and thus tunable (another contributor ran the tune for me). This let me turn a term that increases the bonus when we initially underestimated the opponent's move into one that grows gradually with the size of that underestimation. I also implemented a variant of the prior countermove bonus for captures, since Stockfish has separate history tables for quiet moves and captures.

- Stockfish has a feature called "late move reductions" (LMR), in which moves it considers unlikely to be the best move are searched at a reduced depth. I implemented two heuristics that adjust this depth based on how the evaluation changes when the move is played.

- Stockfish stops searching a position if it has already searched that position to at least the current depth and the stored result shows the search would fail. If a best move is stored, I have the engine look up the entry for the position after that move and make sure its evaluation would still trigger the cutoff.

- Stockfish has a series of "correction tables" it uses to keep track of the error in its previous evaluations. Using the fact that the magnitude of the correction roughly represents the uncertainty in the evaluation, I made it more difficult for the engine to prune positions that have a large correction.
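The block-sparsity item in the list above can be illustrated with a NumPy sketch. CuPy largely mirrors the NumPy API, so the same code is close to a GPU version. The setup is an assumption rather than the contributed tool: neurons are grouped into consecutive blocks of `block` neurons, a block is active for a sample if any of its neurons is nonzero, and the search greedily applies the single best swap. All function names are hypothetical.

```python
import numpy as np  # CuPy exposes a largely NumPy-compatible API

def active_blocks(A, perm, block):
    """Count (sample, block) pairs with at least one nonzero neuron.

    A is a (samples, neurons) 0-1 matrix; the neuron count must divide by block.
    """
    X = A[:, perm].reshape(A.shape[0], -1, block)
    return int(X.any(axis=2).sum())

def swap_deltas(A, perm, block):
    """D[i, j] = change in active_blocks() if positions i and j are swapped."""
    X = A[:, perm].astype(np.int32)
    blk = np.arange(X.shape[1]) // block               # block index of each position
    counts = X.reshape(X.shape[0], -1, block).sum(axis=2)
    Z = (counts == 0).astype(np.int32)                 # block is empty for this sample
    O = (counts == 1).astype(np.int32)                 # block has exactly one active neuron
    E = O[:, blk] * X                                  # i is the lone active neuron of its block
    # i's block turns on where it was empty and j is active (Z^T X), and turns
    # off where i was its only active neuron and j is inactive (e_i - E^T X).
    half = (Z.T @ X)[blk] - E.sum(axis=0)[:, None] + E.T @ X
    D = half + half.T                                  # add the same effect on j's block
    D[blk[:, None] == blk[None, :]] = 0                # swaps inside one block change nothing
    return D

def greedy_reorder(A, block, steps):
    """Repeatedly apply the swap that deactivates the most (sample, block) pairs."""
    perm = np.arange(A.shape[1])
    for _ in range(steps):
        D = swap_deltas(A, perm, block)
        i, j = np.unravel_index(np.argmin(D), D.shape)
        if D[i, j] >= 0:                               # no remaining swap helps
            break
        perm[[i, j]] = perm[[j, i]]
    return perm

rng = np.random.default_rng(0)
A = (rng.random((256, 32)) < 0.1).astype(np.int8)      # toy activation pattern
perm = greedy_reorder(A, block=4, steps=20)
print(active_blocks(A, np.arange(32), 4), "->", active_blocks(A, perm, 4))
```

Every swap's effect comes from a few products of 0-1 matrices, so scoring all pairs at once is one batch of dense matrix multiplications rather than a loop over candidate swaps.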
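For the prior countermove item in the list above, here is a hypothetical sketch of what "fractional, tunable multipliers" and "a term that grows gradually with the underestimation" can look like in integer code. The function name, constants and exact shape are invented for illustration and are not Stockfish's formula.

```python
def prior_countermove_bonus(depth: int, underestimate: int,
                            base: int = 1024, slope: int = 96, cap: int = 2048) -> int:
    """Hypothetical history bonus for an opponent move that pushed us below our bounds.

    base and slope are fixed-point multipliers in units of 1/1024, so a tuner
    can adjust them in fractional steps while the engine stays in integer
    arithmetic. Instead of a flat extra bonus once `underestimate` crosses a
    threshold, the extra term grows with it, up to `cap`.
    """
    extra = slope * min(max(underestimate, 0), cap) // 128
    return depth * depth * (base + extra) // 1024

print(prior_countermove_bonus(4, 0), prior_countermove_bonus(4, 1280))  # 16 31
```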
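The late move reductions item in the list above can be sketched as a reduction function. The log(depth) * log(move number) base is a common LMR shape. The `eval_change` term is a hypothetical stand-in for the two heuristics, whose exact conditions are not described here. The usual full-depth re-search after a reduced search beats alpha is omitted.

```python
import math

def lmr_reduction(depth: int, move_number: int, eval_change: int,
                  swing: int = 200) -> int:
    """Hypothetical late-move reduction (in plies) with an evaluation-change term.

    eval_change is how much the static evaluation moves in our favour when the
    move is played; the adjustment and the swing constant are illustrative.
    """
    if depth < 3 or move_number < 4:          # shallow nodes and early moves: no reduction
        return 0
    r = int(math.log(depth) * math.log(move_number) / 2)
    r -= eval_change // swing                 # improving moves are reduced less
    return max(0, min(r, depth - 1))          # always leave at least one ply to search

print(lmr_reduction(10, 10, 0), lmr_reduction(10, 10, 400), lmr_reduction(10, 10, -400))  # 2 0 4
```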
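The transposition-table item in the list above can be illustrated with a toy table. Stockfish's real table is far more compact and handles more cases. Here positions are keyed by strings, `after` is a hypothetical callback giving the key of the position after a move, and only the fail-high case is shown. Comparing the child's stored value while ignoring its depth and bound type is a simplifying assumption.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

LOWER, UPPER, EXACT = "lower", "upper", "exact"

@dataclass
class Entry:
    depth: int
    value: int                     # score from the side to move's point of view
    bound: str                     # LOWER, UPPER or EXACT
    best_move: Optional[str] = None

def can_fail_high(tt: Dict[str, Entry], key: str, depth: int, beta: int,
                  after: Callable[[str, str], str]) -> bool:
    """True if a stored entry lets us return a fail high (score >= beta) without searching."""
    e = tt.get(key)
    if e is None or e.depth < depth or e.bound == UPPER or e.value < beta:
        return False
    if e.best_move is not None:
        child = tt.get(after(key, e.best_move))
        # Negamax: the child's score is from the opponent's side, so negate it.
        # If the position after the best move no longer looks good enough,
        # distrust the stored result and search instead of cutting off.
        if child is not None and -child.value < beta:
            return False
    return True

def after(key: str, move: str) -> str:     # toy stand-in for making a move
    return f"{key}/{move}"

tt = {"root": Entry(depth=8, value=120, bound=LOWER, best_move="e2e4"),
      "root/e2e4": Entry(depth=7, value=-130, bound=EXACT)}
print(can_fail_high(tt, "root", depth=6, beta=100, after=after))  # True
```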
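The correction-table item in the list above can be sketched with a reverse futility test. In this test a node is pruned when its evaluation minus a margin still reaches beta. Using reverse futility as the example, the margin constants, and the `abs(correction) // 2` widening are all assumptions made for illustration. The point is only that a larger correction makes pruning harder.

```python
def reverse_futility_prune(static_eval: int, correction: int, beta: int,
                           depth: int, margin_per_depth: int = 100) -> bool:
    """Hypothetical reverse futility test: prune if static_eval - margin >= beta.

    static_eval is assumed to already include the correction-table adjustment;
    |correction| is treated as a rough uncertainty estimate that widens the
    margin, so heavily corrected positions are pruned less often.
    """
    margin = margin_per_depth * depth + abs(correction) // 2
    return static_eval - margin >= beta

print(reverse_futility_prune(400, 0, 100, 2), reverse_futility_prune(400, 300, 100, 2))  # True False
```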

Vdwnumbers.org Distributed Computing Project

In 2015 I created a distributed computing project in BOINC to calculate lower bounds for a combinatorial object called a van der Waerden number, using a construction based on the discrete logarithm modulo a prime. This started out as a way to get acquainted with Unix and C++ and to combine the computing power of a few laptops into an interesting project, but the project soon grew to include over 500 users in 90 countries. We discovered around a dozen new bounds, leading to a paper.
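To make the discrete-logarithm construction concrete, here is a small Python sketch (the project's client was C++). It colors each x in 1..p-1 by its discrete logarithm to a primitive root, taken modulo the number of colors r. It then finds the longest monochromatic arithmetic progression by brute force. Several details are not described above and are left out: how the project colored 0, what it minimized over, and how a coloring becomes a van der Waerden bound. The function names are hypothetical.

```python
from typing import List

def prime_factors(n: int) -> List[int]:
    """Distinct prime factors of n by trial division."""
    fs, d = [], 2
    while d * d <= n:
        if n % d == 0:
            fs.append(d)
            while n % d == 0:
                n //= d
        d += 1
    if n > 1:
        fs.append(n)
    return fs

def primitive_root(p: int) -> int:
    """Smallest generator of the multiplicative group modulo an odd prime p."""
    qs = prime_factors(p - 1)
    for g in range(2, p):
        if all(pow(g, (p - 1) // q, p) != 1 for q in qs):
            return g
    raise ValueError("p must be an odd prime")

def dlog_coloring(p: int, r: int) -> List[int]:
    """color[x] = (log_g x) mod r for x in 1..p-1; index 0 is unused."""
    g = primitive_root(p)
    color = [0] * p
    x = 1
    for e in range(p - 1):                 # walk g^0, g^1, ..., g^(p-2)
        color[x] = e % r
        x = x * g % p
    return color

def longest_mono_ap(color: List[int], lo: int, hi: int) -> int:
    """Longest monochromatic arithmetic progression inside [lo, hi] (brute force)."""
    best = 1
    for a in range(lo, hi + 1):
        for d in range(1, hi - a + 1):
            length, x = 1, a + d
            while x <= hi and color[x] == color[a]:
                length += 1
                x += d
            best = max(best, length)
    return best

print(longest_mono_ap(dlog_coloring(101, 2), 1, 100))
```

With r = 2 the two color classes are exactly the quadratic residues and non-residues, since a number is a square modulo p precisely when its discrete logarithm is even.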

The infrastructure consisted of a server running a MySQL database, which communicated through BOINC with clients running a 200-line C++ program.

The server assigned ranges of integers to clients, and for each prime in its range a client returned the minimum length of the longest monochromatic arithmetic progression in a coloring corresponding to that prime.

Two clients validated each other's results and sent them to the server, which updated the table of bounds in real time.

I revamped the project in high school to focus on the two-color case of the problem, which allowed packing the coloring into bits and reduced the memory requirement by a factor of 8. This in turn raised the upper limit of the primes checked from 1 billion to 4 billion.
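The factor of 8 comes from storing one bit per color instead of one byte, which only works when there are two colors. Below is a minimal Python sketch of that packing; the function names are hypothetical. Suppose the whole coloring for a prime near 4 billion is held in memory. At one byte per entry it needs roughly 4 GB, while packed bits need roughly 500 MB.

```python
import random
from typing import List

def pack_colors(colors: List[int]) -> bytearray:
    """Store a two-coloring as bits: byte i // 8 holds the color of i at bit i % 8."""
    packed = bytearray((len(colors) + 7) // 8)
    for i, c in enumerate(colors):
        if c:
            packed[i >> 3] |= 1 << (i & 7)
    return packed

def color_at(packed: bytearray, i: int) -> int:
    """Read back the color of integer i from the packed bits."""
    return (packed[i >> 3] >> (i & 7)) & 1

rng = random.Random(0)
colors = [rng.randint(0, 1) for _ in range(1000)]
packed = pack_colors(colors)
print(len(bytes(colors)), len(packed))  # 1000 125
```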

Chess Engine YouTube Channel

I noticed recently that there is a lot of interest in projects like Stockfish and Lc0, with videos about games between these engines garnering hundreds of thousands of views. However, there are plenty of misconceptions about how these engines work. I make videos about the development of these engines, with a focus on my own work. The channel has about 4,000 subscribers and over 100,000 views, and the videos have brought several new contributors to Stockfish.